Kubernetes 101 : Troubleshooting "CrashLoopBackOff - host not found in resolver "kube-dns.kube-system.svc.cluster.local." -

We can see the "crashing" pod using the below command:

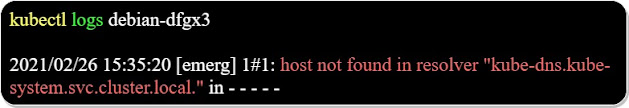

We can also look at the logs:

Because the pod is in a "CrashloopBackoff" state, we can't log into to investigate the cause of the error.

Checking the DNS pods:

The most logical cause would be that the pod can't contact the "kube-dns" service.

We can look into the "kube-dns" service using the below command:

We can also display the "core-dns" pods that provides the DNS service inside the kubernetes cluster using the below command:

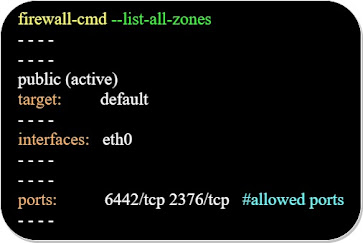

Usually the issue is that the UDP DNS packets get dropped before arriving to the cni0 bridge interface, because of a firewall rule on the node containing the "CrashLoopBack" pod.

Re-deploying the "CrashLoopBackOff" pod:

We can delete our "CrashLoopBackOff" pod, so a new one would be re-deployed to replace it:

We check the deployment of our new pod using the below command:

Comments