Kubernetes 101: Pod Error: "CrashLoopBackOff"

Sometimes a pod gets a "CrashLoopBackOff" status, as we can see below:

In this example, the pod stops and Kubernetes restarts it over and over again.

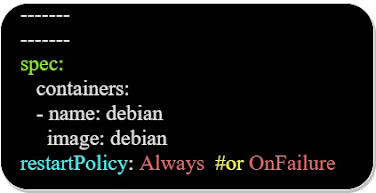

It happens because the container in the pod keeps exiting while the "restartPolicy" of the pod is set to "Always" (the default) or "OnFailure", as we can see in the YAML configuration file below:
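As a minimal sketch of where this setting lives, the shell snippet below writes a small pod manifest to a file. The pod name `crash-demo`, the `busybox` image, the file name `crash-demo.yaml` and the failing command are illustrative assumptions, not the original configuration; the point is only the `restartPolicy` field.

```shell
# Write a hypothetical pod manifest; names, image and command are placeholders.
cat > crash-demo.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: crash-demo
spec:
  # "Always" is the default; "OnFailure" and "Never" are the other values.
  restartPolicy: Always
  containers:
    - name: app
      image: busybox
      # Exits immediately with an error, so the container gets restarted.
      command: ["sh", "-c", "exit 1"]
EOF

# To try it on a test cluster:
#   kubectl apply -f crash-demo.yaml
```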

Kubernetes will try to restart the crashing pod, increasing the delay between restarts each time, and you will see the "CrashLoopBackOff" status when executing the "kubectl get pods" command.
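To make the growing delay concrete, here is a small sketch that prints the wait before each restart. It assumes the commonly documented kubelet behaviour: the delay starts at 10 seconds, doubles after each crash, and is capped at 300 seconds (five minutes). The function name `backoff_schedule` is made up for this illustration, and the exact values may differ depending on the cluster version and configuration.

```shell
# Print the assumed back-off delay (in seconds) before each of N restarts.
backoff_schedule() {
  restarts=$1
  delay=10   # assumed initial delay
  cap=300    # assumed maximum delay
  i=1
  while [ "$i" -le "$restarts" ]; do
    echo "$delay"
    delay=$((delay * 2))
    if [ "$delay" -gt "$cap" ]; then
      delay=$cap
    fi
    i=$((i + 1))
  done
}

backoff_schedule 7
```

With these assumptions, the seven delays are 10, 20, 40, 80, 160, 300 and 300 seconds.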

To investigate the reason behind the "CrashLoopBackOff" status, we can use the command below:
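The original command is not shown here, so the following is only a sketch built on two standard kubectl commands that are commonly used for this. `kubectl describe pod` shows the pod's events and the last container state, and `kubectl logs --previous` prints the output of the previous, crashed container. The helper name `investigate_pod` and the pod name `crash-demo` are assumptions for illustration.

```shell
# Hypothetical helper: collect the usual clues for a crashing pod.
investigate_pod() {
  pod=$1
  # Events, restart count, last state and exit code of the container.
  kubectl describe pod "$pod"
  # Logs of the previous (crashed) container instance.
  kubectl logs "$pod" --previous
}

# Example call against a test cluster (hypothetical pod name):
#   investigate_pod crash-demo
```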

To avoid that, we could run a command in the pod that keeps it from exiting, as below in the YAML configuration file:
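As a sketch of that idea, the snippet below writes a manifest whose container runs a command that never returns. The pod name `keep-alive-demo`, the `busybox` image, the file name and the choice of `tail -f /dev/null` are assumptions, since the original configuration is not shown; any long-running command would do.

```shell
# Write a hypothetical manifest whose container never exits on its own.
cat > keep-alive-demo.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: keep-alive-demo
spec:
  containers:
    - name: app
      image: busybox
      # Blocks forever, so the container stays in the Running state.
      command: ["sh", "-c", "tail -f /dev/null"]
EOF

# To try it on a test cluster:
#   kubectl apply -f keep-alive-demo.yaml
```

This suits containers whose main process simply finishes. If the real application crashes, the logs are still the place to look for the cause.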
